Vibe Coding Security: Secure AI-Generated Code Before It Ships

AI coding tools like Claude Code, Codex, Cursor, Windsurf, and GitHub Copilot are changing how software is built. Learn how vibe coding security helps teams detect, prioritize, and remediate AI-generated vulnerabilities before production.

AI coding is no longer experimental.

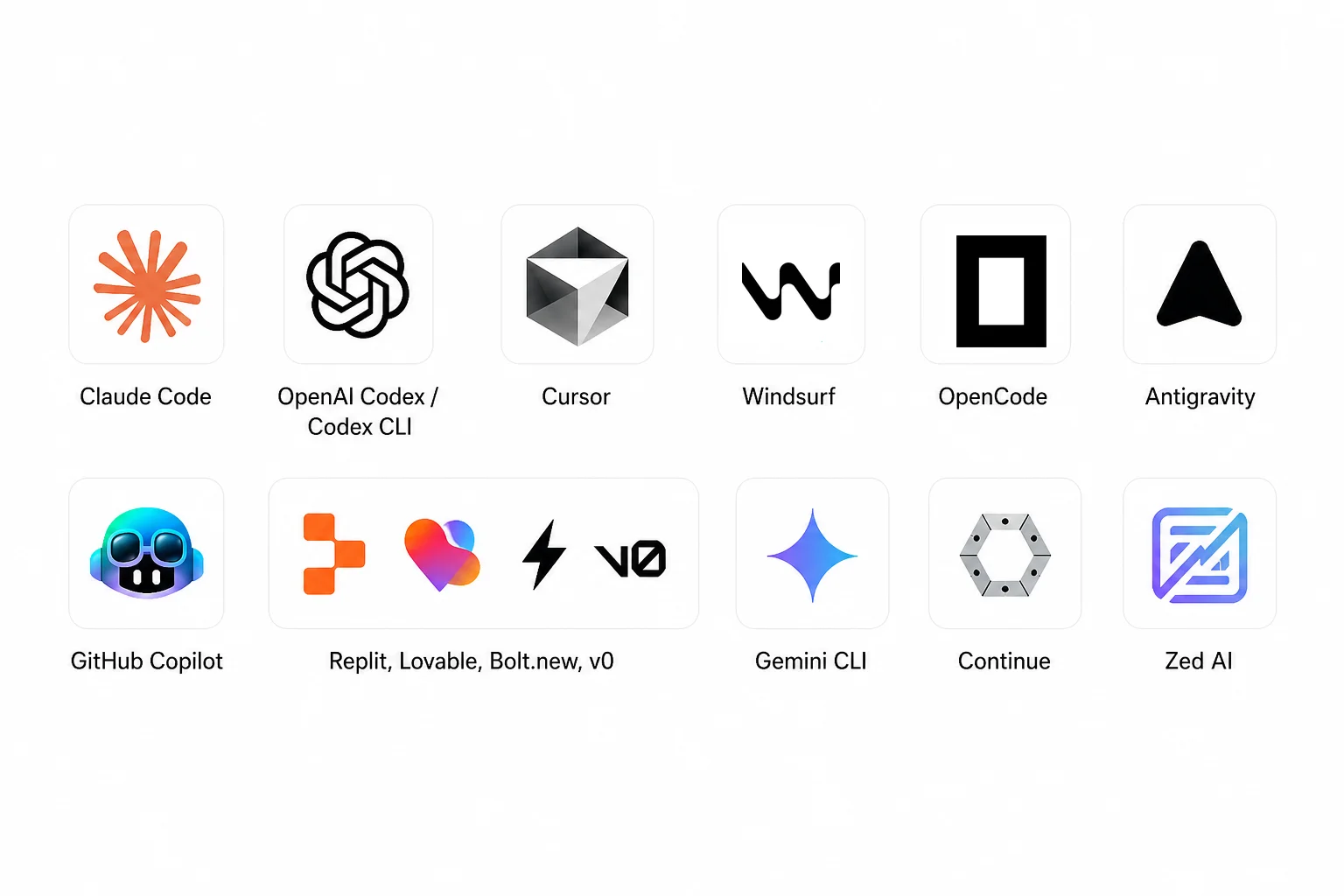

Developers are now using tools like Claude Code, OpenAI Codex, Cursor, Windsurf, OpenCode, GitHub Copilot, Replit, Lovable, Bolt.new, v0, Gemini CLI, Continue, and Zed AI to generate code, edit files, fix bugs, build features, and create pull requests faster than ever.

This new workflow is often called vibe coding — describing what you want in natural language and letting AI generate much of the implementation.

The productivity gain is real. But the security risk is growing just as fast.

Stack Overflow’s 2025 Developer Survey found that 84% of developers use or plan to use AI tools, while GitHub’s Octoverse 2025 reported that more than 1.13 million public repositories now depend on generative-AI SDKs, up 178% year over year. Google Cloud’s 2024 DORA report also found that more than 75% of respondents rely on AI for at least one daily professional responsibility, including code writing and code explanation.

AI is changing how software is built. Now AppSec needs to change how software is secured.

What is Vibe Coding Security?

Vibe coding security is the practice of securing software created with AI coding assistants, AI IDEs, and autonomous coding agents.

It protects teams using tools such as:

| AI Coding Tool | Common Use Case |
|---|---|
| Claude Code | Agentic coding, codebase understanding, file editing, and command execution |
| OpenAI Codex / Codex CLI | Terminal-based coding agent, repository reading, edits, and command execution |
| Cursor | AI-first IDE and agentic development workflow |
| Windsurf | Agentic IDE workflow powered by Cascade |
| OpenCode | Open-source AI coding agent for terminal, IDE, or desktop workflows |
| GitHub Copilot | AI pair programming and code completion |
| Replit, Lovable, Bolt.new, v0 | Fast app generation and prototyping |
| Gemini CLI, Continue, Zed AI | AI-assisted local development |

Claude Code is positioned as an agentic coding tool for working in codebases. OpenAI’s Codex CLI can read a repository, make edits, and run commands from a terminal workflow. Cursor describes agents that turn ideas into code, while Windsurf’s Cascade is described as an agentic AI assistant with code/chat modes, tool calling, checkpoints, real-time awareness, and linter integration.

That means AI coding tools are no longer just autocomplete. They can directly influence production code.

Why Vibe Coding Creates Security Risk

Traditional AppSec was built around a slower development loop:

Write code → Commit → Pull request → Scan → Triage → Fix

Vibe coding changes that loop:

Prompt → Generate code → Accept changes → Run tests → Ship

This is faster, but it creates a security gap.

AI-generated code can look clean, compile successfully, and still introduce vulnerabilities. Common risks include:

- Missing authorization checks

- Broken object-level authorization

- Hardcoded secrets

- Insecure dependencies

- Hallucinated or typosquatted packages

- Unsafe API endpoints

- Disabled row-level security

- Weak authentication logic

- Insecure cloud or infrastructure configuration

- AI-generated fixes that create new issues
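To make the first two risks concrete, here is a minimal Python sketch of broken object-level authorization. The `Invoice` type, the in-memory `INVOICES` store, and both handler functions are hypothetical, invented for illustration rather than taken from any framework. The first handler is the kind of code an assistant can produce that runs and passes a happy-path test, yet returns any invoice to any authenticated caller who knows its ID; the second adds the missing ownership check.

```python
from dataclasses import dataclass


@dataclass
class Invoice:
    id: int
    owner_id: int
    amount_cents: int


# Hypothetical in-memory store standing in for a real database.
INVOICES = {
    1: Invoice(id=1, owner_id=100, amount_cents=4999),
    2: Invoice(id=2, owner_id=200, amount_cents=12000),
}


class Forbidden(Exception):
    """Raised when a caller requests an object they do not own."""


def get_invoice_vulnerable(current_user_id: int, invoice_id: int) -> Invoice:
    # Looks correct and compiles, but never checks ownership:
    # any authenticated user can read any invoice by ID (BOLA).
    return INVOICES[invoice_id]


def get_invoice_fixed(current_user_id: int, invoice_id: int) -> Invoice:
    invoice = INVOICES.get(invoice_id)
    # Treat "missing" and "not yours" the same way so the response
    # does not reveal which invoice IDs exist.
    if invoice is None or invoice.owner_id != current_user_id:
        raise Forbidden(f"invoice {invoice_id} not accessible")
    return invoice


if __name__ == "__main__":
    # User 100 can read invoice 2, which belongs to user 200.
    print(get_invoice_vulnerable(100, 2).owner_id)  # 200
    try:
        get_invoice_fixed(100, 2)
    except Forbidden as exc:
        print("blocked:", exc)  # blocked: invoice 2 not accessible
```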

The problem is not only that AI can generate vulnerable code. The bigger problem is that AI can generate vulnerable code faster than security teams can manually review and remediate it.

From AI-Generated Code to AI-Native Remediation

Claude Code / Codex / Cursor / Windsurf / OpenCode / Copilot → AI-generated code → Plexicus detects risk → Prioritize by context → AI-native remediation → Verified fix

Most security tools still focus on detection.

They scan the repository, create alerts, and push findings into a backlog. That worked when code moved slower. It becomes painful when developers and AI agents are generating code continuously.

In the age of vibe coding, security teams do not need more noise. They need answers:

- Is this AI-generated code actually risky?

- Is the vulnerability reachable?

- Which developer or team owns it?

- What is the safest fix?

- Can the fix be generated automatically?

- Can the remediation be validated before merge?

This is why vibe coding security needs to go beyond scanning. It needs AI-native remediation.
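One way to turn those questions into a ranked queue is to weight each finding's severity by its context. The sketch below is illustrative only: the `Finding` fields, the severity weights, and the reachability and exposure multipliers are assumptions chosen for the example, not Plexicus's scoring model or any standard.

```python
from dataclasses import dataclass

# Illustrative base weights per severity level (assumed, not a standard).
SEVERITY_WEIGHT = {"critical": 10.0, "high": 7.0, "medium": 4.0, "low": 1.0}


@dataclass
class Finding:
    rule: str
    severity: str
    reachable: bool        # is the vulnerable code on a live call path?
    internet_facing: bool  # is the affected service publicly exposed?
    owner: str             # team or developer responsible for the fix


def risk_score(f: Finding) -> float:
    score = SEVERITY_WEIGHT[f.severity]
    # Unreachable code is still worth fixing, but far less urgent (assumed factor).
    score *= 1.0 if f.reachable else 0.2
    # Public exposure raises urgency (assumed factor).
    score *= 1.5 if f.internet_facing else 1.0
    return score


def prioritize(findings: list[Finding]) -> list[Finding]:
    # Highest score first; sorted() is stable, so ties keep their input order.
    return sorted(findings, key=risk_score, reverse=True)


if __name__ == "__main__":
    findings = [
        Finding("sql-injection", "critical", reachable=False, internet_facing=False, owner="platform"),
        Finding("missing-authz", "high", reachable=True, internet_facing=True, owner="billing"),
        Finding("outdated-dep", "medium", reachable=True, internet_facing=False, owner="web"),
    ]
    for f in prioritize(findings):
        print(f"{risk_score(f):5.1f}  {f.rule}  -> {f.owner}")
```

With these assumed weights, a reachable, internet-facing authorization bug outranks a critical finding in dead code, which is the point of prioritizing by context rather than by severity alone.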

What is AI-Native Remediation?

AI-native remediation helps teams move from finding vulnerabilities to fixing them.

Instead of only saying:

“This code may be vulnerable.”

A better workflow says:

“This function is risky, this is why it matters, this is the recommended fix, and this is how to validate the remediation.”

For AI-generated code, remediation should be:

- Context-aware

- Developer-friendly

- Pull request-ready

- Prioritized by real risk

- Verified after the fix

- Fast enough to keep up with AI coding tools

This is the new AppSec requirement: not just detect faster, but fix faster — and reduce mean time to remediation (MTTR).
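MTTR is the average time between a finding being detected and its fix being verified. Below is a minimal Python sketch of that arithmetic, assuming each finding is recorded as a `(detected_at, fixed_at)` pair with `fixed_at` left as `None` while the finding is still open. Open findings are excluded because they have no remediation time yet.

```python
from datetime import datetime, timedelta
from typing import Optional


def mean_time_to_remediation(
    findings: list[tuple[datetime, Optional[datetime]]],
) -> Optional[timedelta]:
    """Average (fixed_at - detected_at) over findings that have been fixed.

    Returns None if nothing has been fixed yet.
    """
    durations = [fixed - detected for detected, fixed in findings if fixed is not None]
    if not durations:
        return None
    # sum() needs a timedelta start value to add timedelta objects together.
    return sum(durations, timedelta()) / len(durations)


if __name__ == "__main__":
    d = datetime(2025, 1, 1, 9, 0)
    findings = [
        (d, d + timedelta(hours=4)),  # fixed in 4 hours
        (d, d + timedelta(days=2)),   # fixed in 48 hours
        (d, None),                    # still open, excluded
    ]
    print(mean_time_to_remediation(findings))  # 1 day, 2:00:00
```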

How Plexicus Helps Secure Vibe Coding

Plexicus helps teams detect, prioritize, and remediate vulnerabilities across the software development lifecycle with AI-powered security automation.

For teams adopting Claude Code, Codex, Cursor, Windsurf, OpenCode, GitHub Copilot, Replit, Lovable, Bolt.new, v0, and other AI coding tools, Plexicus adds the missing security layer.

With Plexicus, teams can:

- Detect vulnerable AI-generated code early

- Find secrets, insecure dependencies, and risky APIs

- Prioritize vulnerabilities based on real risk

- Reduce alert noise and duplicate findings

- Generate actionable remediation guidance

- Support developers inside modern workflows

- Shorten mean time to remediation

- Secure applications from code to cloud

The goal is not to slow down AI coding. The goal is to make AI coding safe enough for production.

Vibe Coding Security Checklist

Use this checklist if your team is adopting AI coding tools:

| Question | Why It Matters |
|---|---|
| Are developers using Claude Code, Codex, Cursor, Copilot, or other AI coding tools? | You need visibility into where AI-generated code enters the SDLC. |
| Are AI-generated dependencies scanned? | AI tools can suggest vulnerable, outdated, or hallucinated packages. |
| Are secrets detected before commit? | AI-generated examples can accidentally include tokens or unsafe config. |
| Are authorization flaws tested? | AI-generated endpoints often miss ownership and tenant checks. |
| Are findings prioritized by real risk? | More AI-generated code can mean more alerts, so context matters. |
| Can fixes be generated or recommended automatically? | Manual remediation cannot keep up with AI-speed development. |
| Can fixes be validated before merge? | AI-generated fixes need verification, not blind trust. |
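For the secrets question, the core of a pre-commit check is pattern matching over the text being committed. The sketch below uses two well-known token formats (long-term AWS access key IDs start with `AKIA` followed by 16 uppercase letters or digits; classic GitHub personal access tokens start with `ghp_`) plus a generic "quoted literal assigned to a secret-sounding name" heuristic that is an assumption of this example. Real scanners use far more rules and entropy checks; this only shows the shape of the check.

```python
import re

# Illustrative rules only; production scanners ship many more.
SECRET_PATTERNS = {
    "aws-access-key-id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "github-classic-pat": re.compile(r"\bghp_[A-Za-z0-9]{36}\b"),
    # Heuristic (assumption): a quoted literal of 8+ chars assigned to a
    # secret-sounding name, e.g. API_KEY = "..." or password: '...'.
    "hardcoded-credential": re.compile(
        r"(?i)\b(api_key|secret|password|token)\s*[:=]\s*[\"'][^\"']{8,}[\"']"
    ),
}


def scan_text(text: str) -> list[tuple[int, str]]:
    """Return (line_number, rule_name) for every line that matches a rule."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, name))
    return hits


if __name__ == "__main__":
    # AKIAIOSFODNN7EXAMPLE is AWS's documented placeholder key ID.
    sample = (
        'region = "us-east-1"\n'
        'aws_key = "AKIAIOSFODNN7EXAMPLE"\n'
        'API_KEY = "sk-test-1234567890"\n'
    )
    for lineno, rule in scan_text(sample):
        print(f"line {lineno}: possible secret ({rule})")
```

To act as a gate, a hook script (for example `.git/hooks/pre-commit`) would run this over staged content and exit non-zero whenever `scan_text` returns hits; that wiring is omitted here.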

If the answer to most of these is “no,” your organization may be adopting AI coding faster than it is securing it.
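For the dependency row of the checklist, a cheap first check can run before anything is installed: is each package an AI tool added on an approved list, or suspiciously close to one? The sketch below uses Python's standard `difflib.get_close_matches`; the `APPROVED` allowlist and the 0.8 similarity cutoff are hypothetical choices for illustration. A near-miss may be a typosquat, and a name that matches nothing may be hallucinated; both deserve a registry lookup and a human look.

```python
import difflib

# Hypothetical allowlist of packages the team has already vetted.
APPROVED = {"requests", "numpy", "pandas", "django", "flask", "cryptography"}


def classify_dependency(name: str, cutoff: float = 0.8) -> str:
    """Label a proposed dependency as approved, suspicious, or unknown."""
    normalized = name.strip().lower()
    if normalized in APPROVED:
        return "approved"
    # Sorted list keeps results deterministic regardless of set ordering.
    close = difflib.get_close_matches(normalized, sorted(APPROVED), n=1, cutoff=cutoff)
    if close:
        # Very similar to a vetted name but not identical: possible typosquat.
        return f"suspicious: looks like '{close[0]}'"
    # Not on the list and not close to anything: possibly hallucinated,
    # possibly just new. Either way, review before installing.
    return "unknown: review before install"


if __name__ == "__main__":
    for pkg in ["requests", "reqeusts", "flask-secure-auth-helper"]:
        print(f"{pkg}: {classify_dependency(pkg)}")
```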

Conclusion

Vibe coding is changing software development. Developers are using Claude Code, Codex, Cursor, Windsurf, OpenCode, Copilot, and other AI coding tools to build faster. But faster code creation also means faster vulnerability creation.

Traditional AppSec cannot rely only on late-stage scanning and manual remediation anymore. The new rule is simple:

Secure AI-generated code before it ships.

Plexicus helps teams detect, prioritize, and remediate vulnerabilities across the SDLC, so organizations can adopt AI coding without letting security fall behind.

Book a demo with Plexicus and see how AI-native remediation works in your pipeline.

Want to go deeper on the remediation side? Read: AI-Native Remediation for Vibe Coding Security

FAQ

What is vibe coding security?

Vibe coding security is the practice of securing software created with AI coding assistants, AI IDEs, and autonomous coding agents. It covers detection, prioritization, and remediation of vulnerabilities in AI-generated code before they reach production.

Which tools are used for vibe coding?

Common vibe coding tools include Claude Code, OpenAI Codex, Cursor, Windsurf, OpenCode, GitHub Copilot, Replit, Lovable, Bolt.new, v0, Gemini CLI, Continue, and Zed AI.

Why is AI-generated code risky?

AI-generated code can introduce missing authorization checks, hardcoded secrets, insecure dependencies, hallucinated packages, unsafe APIs, weak authentication logic, and insecure cloud configuration — often faster than security teams can catch them manually.

Is vibe coding security different from traditional AppSec?

Yes. Traditional AppSec often scans after code is written. Vibe coding security focuses on securing code closer to the moment it is generated, using shift-left principles combined with AI-native remediation.

How does Plexicus help with vibe coding security?

Plexicus helps teams detect, prioritize, and remediate vulnerabilities across the SDLC using AI-powered security automation — scanning code, dependencies, secrets, APIs, and cloud configurations generated by AI coding tools.